I would like to share a case with you, one that got me thinking about the importance of good coding. Some time ago we made some changes to the website of a supplier of stoves and fireplaces. The website has a good amount of authority within its industry, and although it ranked very well on the main product categories, individual products and product types didn’t come up in the search engines as often as we wanted.

The current website was mainly focused on company information with a separate part for the online product catalogue. We decided to create a structure with a major focus on the main product categories, a filter structure that would be optimized automatically for the product types and a better structure to increase indexation of all of the products within the online catalogue.

The goal of the changes was to drive more organic traffic from searches for specific products and types of products and of course to retain the current rankings on main categories. Together with the client we invested some valuable hours coming up with a perfect structure and tackled problems like optimizing pages where combinations of filters were used.

Because the structure would change significantly, the URLs also had to change. Therefore we chose at the same time to implement the cleanest URLs we could create. Of course we redirected all important old pages to their new locations to retain the rankings.

After a few weeks we completed the plan for the website and started building the new version. When it was completed we were confident it would mean a great improvement for the visibility in the SERPs for products and categories. And after the launch it quickly became clear that we were right: organic traffic focused on products and product types increased steadily.

It took some time for the redirects to get picked up by the search engines, but after a while most of the new pages were indexed. To our surprise, however, the rankings for the main categories didn’t get passed on to the respective new pages. They dropped on average 30-40 positions, away from the first pages back into forgotten areas of the search engine index where daylight hardly travels.

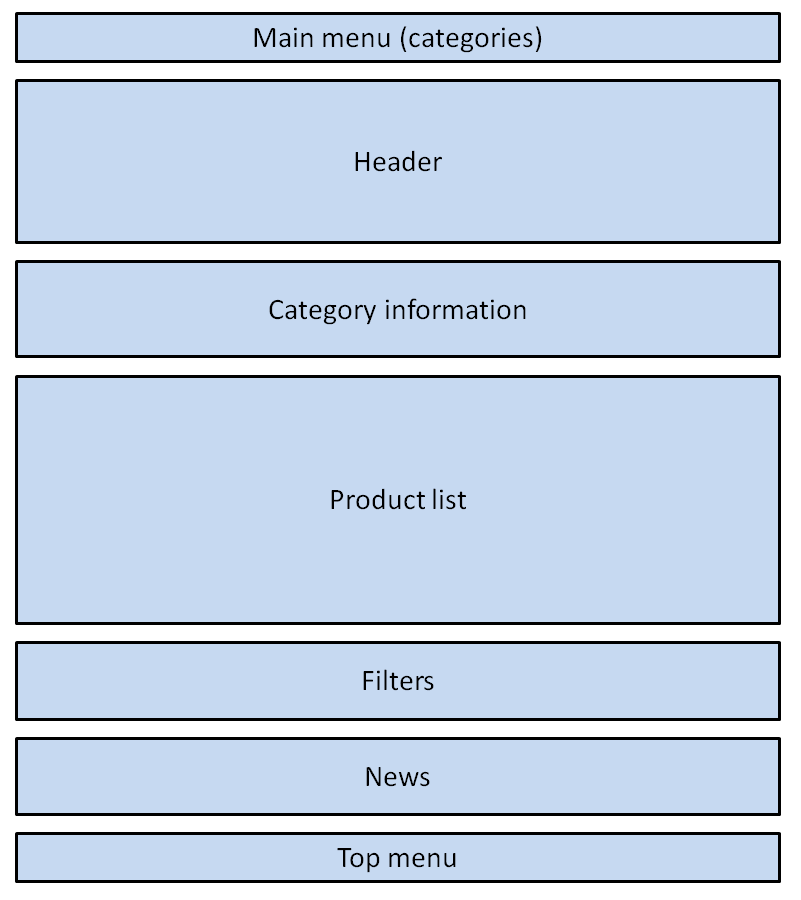

At first we couldn’t find a clear cause for this drop in the engines. But after an extensive analysis we thought there might have been something wrong with the way the category pages were built in the back-end. The structure of a page to a visitor was like this:

But turning off CSS, and therefore showing the page as search engines see it, resulted in the following structure:

Well, you can see the problem here. First of all, although all the necessary information is presented on the category page, the valuable information for search engines is presented at the bottom of the page.

Secondly, the most important pages on the website (the categories) are the last links presented on the page. Therefore search engines could view them as the least significant for users.

Thirdly, because of the high position of the news section in the structure, Google also presented the date of the top news item in the search result (this is also what prompted us to check the source code). Google might perceive the page as a news page instead of a regular content page.
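To make the second point concrete, here is a minimal sketch that lists the links of a page in the order they appear in the source, which is the order a crawler reads them in regardless of where CSS places them on screen. The markup in `SAMPLE` is hypothetical (the client's real template is not reproduced here), and the names `LinkOrderParser` and `links_in_source_order` are made up for this illustration.

```python
from html.parser import HTMLParser


class LinkOrderParser(HTMLParser):
    """Collect (href, anchor text) pairs in the order they occur in the source."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None   # href of the <a> currently open, if any
        self._text = []     # text fragments seen inside that <a>

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def links_in_source_order(html):
    parser = LinkOrderParser()
    parser.feed(html)
    parser.close()
    return parser.links


# Hypothetical markup: news first, category links last in the source,
# even if CSS shows the categories prominently to visitors.
SAMPLE = """
<div id="news"><a href="/news/item-1">Latest news</a></div>
<div id="footer-nav"><a href="/about">About us</a></div>
<div id="categories">
  <a href="/stoves">Stoves</a>
  <a href="/fireplaces">Fireplaces</a>
</div>
"""

for position, (href, text) in enumerate(links_in_source_order(SAMPLE), start=1):
    print(position, href, text)
```

If the category links show up at the end of this list, the source order is probably worth a closer look.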

We decided to make a few changes to the code to present the information in the proper sequence. This resulted in the following structure:

This structure is clearly focused on the important content and important pages. So with eager anticipation we awaited the indexation of the new version of the page. A few hours later the new page was indexed and the rankings immediately rose from pages 3-4 to the first SERP.

Now the old rankings are restored almost completely and traffic is recovering as well. With the changes for the products and filters, organic traffic is higher than ever before. And all that without changing anything in the content or the incoming links. Visitors can hardly notice the difference, but search engines apparently notice it all the more.

So, although you might have a well-structured page for your visitors, how search engines perceive it is also very important. Take a look at your site the way search engines do by disabling CSS, checking the text version of the cached page, or using a tool like SEO browser.
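If you prefer a script over a browser setting, the same check can be approximated in a few lines. This is only a rough sketch, assuming that a plain read of the HTML in source order is a fair stand-in for a page with CSS disabled. The `PAGE` markup and the names `TextOnlyParser` and `text_in_source_order` are invented for this example.

```python
from html.parser import HTMLParser


class TextOnlyParser(HTMLParser):
    """Collect visible text in source order, ignoring <script> and <style>."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0  # > 0 while inside a skipped element

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def text_in_source_order(html):
    parser = TextOnlyParser()
    parser.feed(html)
    parser.close()
    return parser.chunks


# Hypothetical page: CSS floats the introduction to the top for visitors,
# but the news block still comes first in the source.
PAGE = """
<html><head><style>#intro { float: left; }</style></head>
<body>
<div id="news">News: new showroom opened</div>
<div id="intro"><h1>Wood stoves</h1><p>Category introduction.</p></div>
</body></html>
"""

for chunk in text_in_source_order(PAGE):
    print(chunk)
```

Whatever prints first is what comes first in the source, so the content you care about most should be near the top of that output.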